- Turns: Text-input turns. Sonnet 4.6 wrapped in the Hammerstein system prompt.

- Rules: Rulebook ingest. Client-side pdf.js parsing; rulebooks never leave the browser.

- Ethics: Anti-metagame ethical constraint. A hard rule, not a courtesy.

- Demo: Free demo, 5 turns per IP per day.

Hammerstein.

A strategic-reasoning AI for tabletop wargames. Built around the Hammerstein-Equord doctrine. Wargamer mode is live in your browser; no install, no API key, just a $15/month subscription.

What it does

Wargamer mode. Paste three things into the form at hammerstein.ai/wargamer:

- Your board state — pieces, positions, what side you're playing

- A short status report — turn number, what's happened so far, what's giving you trouble

- Your turn question — what you're trying to decide this turn

You get back kriegspiel-style Auftragstaktik orders for the side you are playing: the specific moves your subordinates would receive from a competent commander, not generic strategy tips.
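As a rough illustration of the three inputs above, the sketch below models one turn as a small record and renders it into a single labeled text block. The `TurnRequest` class, its field names, and the section headings are assumptions made for this example; they are not the actual form schema or prompt format used at hammerstein.ai/wargamer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TurnRequest:
    """Hypothetical container for the three pasted inputs."""
    board_state: str
    status_report: str
    turn_question: str

    def render(self) -> str:
        """Join the three inputs into one labeled text block."""
        sections = [
            ("BOARD STATE", self.board_state),
            ("STATUS REPORT", self.status_report),
            ("TURN QUESTION", self.turn_question),
        ]
        # Refuse to build a request if any of the three inputs is blank.
        missing = [name for name, text in sections if not text.strip()]
        if missing:
            raise ValueError(f"missing input(s): {', '.join(missing)}")
        return "\n\n".join(f"{name}\n{text.strip()}" for name, text in sections)


example = TurnRequest(
    board_state="Playing Blue. 2 infantry in hex 0405, 1 armor in hex 0507.",
    status_report="Turn 3. Lost the bridge last turn; supply is stretched.",
    turn_question="Do I counterattack the bridge or hold the ridge?",
)
print(example.render())
```

Keeping the three inputs separate until the last step makes it easy to reject a request that is missing one of them, which is the main point of the sketch.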

It works for any tabletop wargame. The framework is doctrine-driven; the model carries the framework forward into the specific game you're running. Live now: text-input turns, paste-or-PDF rulebook ingest (parsed client-side, never uploaded), board-photo upload (Sonnet 4.6 multimodal — resized and EXIF-normalized in your browser), multi-turn campaign memory across the browser session, and — for paid subscribers — cross-machine campaign sync via Cloudflare D1.

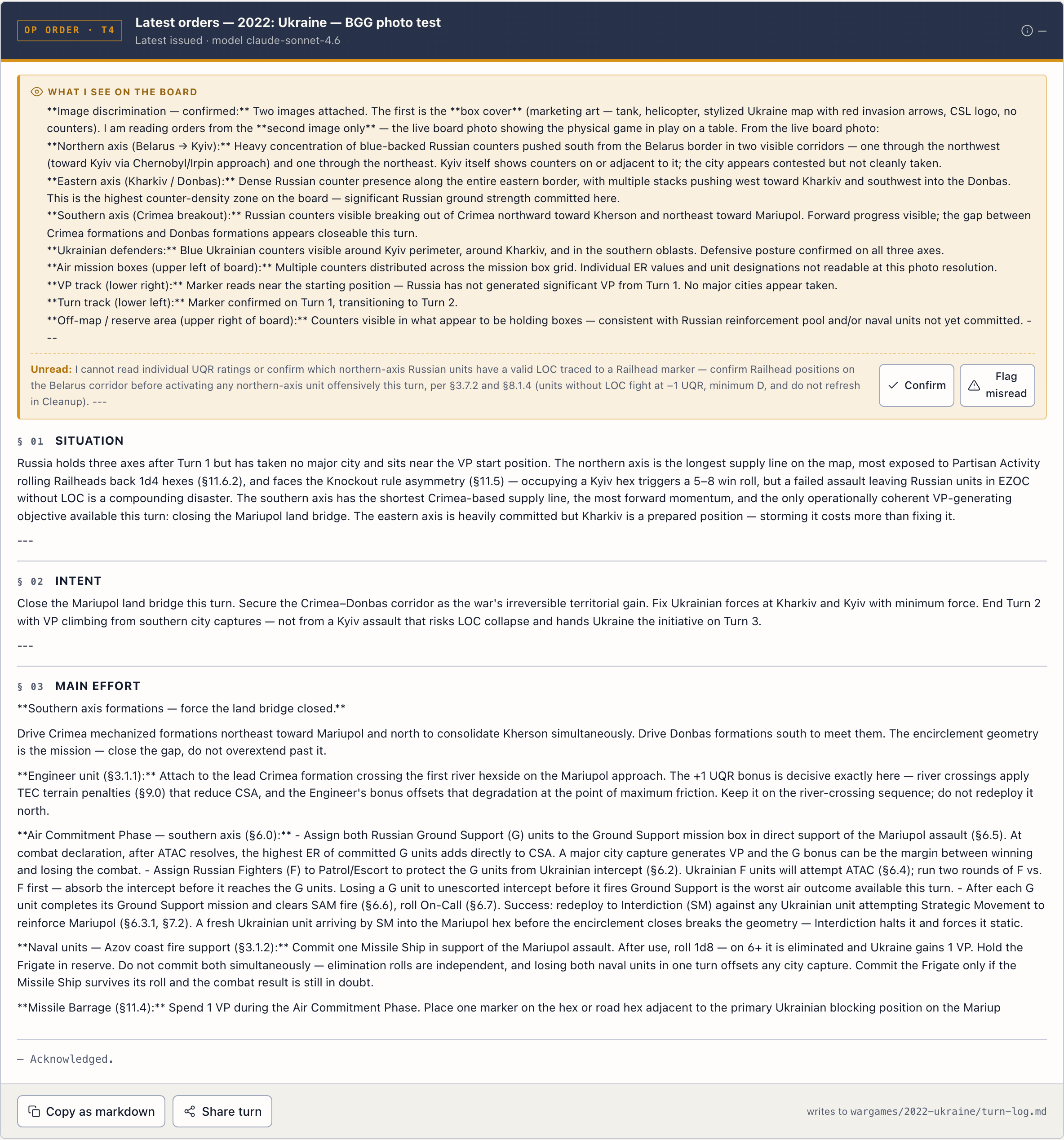

Wargamer mode generating orders for an in-progress campaign.

Roadmap

Updated 2026-05-14. The product ships in phases — each phase is foundational for the next. What's live today is what made next month's work tractable.

- Vision: Board-photo upload. Multimodal in, browser-resized and EXIF-normalized before send.

- Rate: Subscriber rate-lift to 120 turns / month on the paid tier.

- Billing: Stripe + access-token plumbing.

- Storage: Persistent campaign memory across machines, backed by Cloudflare D1.

- Session: Multi-turn campaign memory within a session.

- Vassal: Vassal screenshot + log-file ingest, the first non-photo input pathway.

- Mobile: Mobile UX pass. Sources panel refactored mobile-first.

- Proof: Public benchmark dashboard (promoted forward from Later, 2026-05-14).

- Voice: Voice tier. Whisper STT in, orders out as TTS.

- Desktop: Desktop hotkey app. Tauri-based, Raycast-style.

- Schema: CDG / per-game-state schema. Twilight Struggle and Pax Pamir family first.

Why this exists

I design tabletop wargames at Conflict Simulations Limited. The framework I use to think through scenarios — Hammerstein-Equord's clever-lazy / clever-industrious / stupid-industrious / stupid-lazy diagnostic — is the same one that drives this AI.

The framework is open source: github.com/lerugray/hammerstein. The distilled local model is open source: huggingface.co/lerugray/hammerstein-7b-lora. The hosted Wargamer mode at this site is the paid surface — frontier-model quality, hosted, no install or API key required.

Proof

On the framework-discipline benchmark we built (6 strategic-reasoning questions scored by blind LLM judges against a clever-lazy / verification-gate / structural-fix rubric), the framework wins at every scale tested, from frontier wrap down to a 7B local distilled model.

- v0 (frontier wrap): 6 strategic-reasoning questions × 3 frontier families (Opus 4.7, Sonnet 4.6, GPT-5) × Hammerstein-vs-raw, judged blind by 4 LLM judges across 3 vendors (Anthropic Opus + Sonnet, OpenAI GPT-5, DeepSeek). 53 of 54 ratings preferred Hammerstein-on-frontier.

- v0.1 generic: 4 out-of-domain strategic-reasoning questions, same setup. 48 of 48 ratings preferred Hammerstein. Unanimous across judges and families.

- v0.1 ablation: on Sonnet the Hammerstein system prompt alone ties the full wrap 50/50 — the RAG corpus is decorative at frontier scale. The product story simplifies to a single system-prompt artifact.

- v0.3 baseline (any-prompt-helps check): a 1700-char generic competent strategic-advice system prompt (no Hammerstein vocabulary) on Sonnet vs raw Sonnet. 20 of 24 ratings (about 83%) preferred the generic prompt. Prompting in general helps, and Hammerstein's specific framing on Sonnet (100% in v0) scores roughly 17 percentage points higher.

- v0.4 cross-scale (2026-05-11): Hammerstein-7B (the framework distilled into Qwen2.5-7B local weights, run with no system prompt) vs raw Claude Sonnet 4.6, on the same 6 questions. 18 of 24 ratings preferred the 7B local model — 4 of 6 questions unanimous across all 4 blind judges. Bias-resistant axes: usefulness +0.46, voice +0.75 (rating-point deltas, 1-5 scale).

- v0.4 Pair 1 control: Hammerstein-7B vs raw Qwen2.5-7B (identical weights, adapter on vs off). 24 of 24 ratings preferred the framework.
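The tallies in the list above can be turned into preference rates with a few lines of arithmetic. The counts below are copied from the bullets; the dictionary keys and the `preference_rate` helper are names chosen for this example only.

```python
# (preferred, total) rating counts copied from the bullets above.
tallies = {
    "v0 frontier wrap": (53, 54),
    "v0.1 generic": (48, 48),
    "v0.3 generic prompt vs raw Sonnet": (20, 24),
    "v0.4 7B vs raw Sonnet": (18, 24),
    "v0.4 Pair 1 control": (24, 24),
}


def preference_rate(preferred: int, total: int) -> float:
    """Share of ratings won by the preferred side, in percent."""
    return 100.0 * preferred / total


for name, (preferred, total) in tallies.items():
    print(f"{name}: {preferred}/{total} = {preference_rate(preferred, total):.1f}%")

# The "~17 points" in the v0.3 bullet: Hammerstein on Sonnet in v0 (stated as 100%)
# minus the generic prompt's rate on Sonnet.
generic_rate = preference_rate(*tallies["v0.3 generic prompt vs raw Sonnet"])
gap_points = 100.0 - generic_rate
print(f"gap over generic prompt: {gap_points:.1f} points")
```

20 of 24 works out to 83.3%, so the gap to the stated 100% is about 16.7 percentage points, which the v0.3 bullet rounds to ~17.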

Honest caveat on the v0.4 cross-scale read. The rubric rewards Hammerstein vocabulary (clever-lazy / stupid-industrious / verification gates / counter-observation) by design, so framework-fidelity is biased toward the trained-on-the-framework model. The bias-resistant axes (usefulness, voice) are still positive on the 7B side but smaller than framework-fidelity (+0.46 and +0.75 vs +1.46 for framework-fidelity). The result shows the distillation carries framework discipline into 7B weights well enough to beat frontier-without-framework on framework-shaped tasks. It does NOT show the 7B is a better general-purpose model than Sonnet, and it should not be assumed to generalize to math / code / long-context reasoning without separate testing.

Full v0.4 writeup with per-question + per-judge detail: eval/RESULTS-v0.4.md. v0/v0.1 methodology + verdicts: eval/RESULTS-v0.1.md. The benchmark is open source. If you replicate and get materially different results, open an issue.

See the full benchmark dashboard: trajectory across v0 → v0.4, v0.7 human-judge methodology, replication kit.

Pricing

Wargamer mode in your browser. 120 turns per month — sized for real campaign cadence, not metered by the day. Campaigns persist across machines.
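The two limits quoted on this page (5 turns per IP per day on the free demo, 120 turns per month for subscribers) can be pictured as a fixed-window counter. This is a minimal in-memory sketch under that assumption; the `TurnQuota` class and its keys are hypothetical, and the page does not describe how the hosted service actually enforces its limits.

```python
from collections import defaultdict
from datetime import date

FREE_TURNS_PER_DAY = 5       # free demo limit stated on this page
PAID_TURNS_PER_MONTH = 120   # subscriber limit stated on this page


class TurnQuota:
    """In-memory fixed-window counter (illustrative; not the production store)."""

    def __init__(self) -> None:
        # Maps (identity, window label) to the number of turns used in that window.
        self._used: dict[tuple[str, str], int] = defaultdict(int)

    def try_consume(self, who: str, paid: bool, today: date) -> bool:
        """Record one turn and return True, or return False if the window is full."""
        if paid:
            window, limit = today.strftime("%Y-%m"), PAID_TURNS_PER_MONTH
        else:
            window, limit = today.isoformat(), FREE_TURNS_PER_DAY
        key = (who, window)
        if self._used[key] >= limit:
            return False
        self._used[key] += 1
        return True


quota = TurnQuota()
day = date(2026, 5, 14)
demo_results = [quota.try_consume("203.0.113.7", paid=False, today=day) for _ in range(6)]
print(demo_results)
```

In this sketch the sixth free-demo request on the same day is refused, while a subscriber's count only resets when the calendar month changes, so a heavy session on one evening does not hit a daily wall.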

Self-serve from day one. Subscribe, go to hammerstein.ai/wargamer, paste your board state + status + turn question, get back orders in the kriegspiel-Auftragstaktik register. Docs and walkthrough on the site. Bug reports or edge-case questions go to ray@hammerstein.ai — I'm the founder and I read everything.

Subscribe

Already subscribed? Manage subscription: cancel, update payment, view invoices.

FAQ

- What does "Auftragstaktik" mean?

- Mission-type tactics. Orders that specify the intent, not the script: what the higher echelon wants accomplished, with latitude given to subordinates to figure out how. The model produces orders in this register specifically because it's the doctrine the project is tuned to.

- Does it work for [insert specific tabletop wargame here]?

- If you can photograph the board and describe the rules, yes. The optional rulebook PDF gets digested into an AI Commander Reference for that specific game. Tested on hex-and-counter, area-movement, card-driven, and block-and-counter games so far.

- Is this multiplayer?

- No. Single-player at MVP — you against the model, or you using the model as your subordinate-orders generator while you play either side solo. Multiplayer is a future tier.

- What model is behind it?

- Anthropic Sonnet for vision + reasoning at MVP. The framework is what does the work; the underlying model can change without changing the user experience. The open-source local version (Hammerstein-7B QLoRA on HuggingFace) is the proof that the framework survives the model.

- What happens when Anthropic changes the model behavior? (Opus 4.7 reactions, etc.)

- Model behavior drifts release over release; Reddit threads complaining about Opus 4.7 vs 4.6 are recurring. The Hammerstein layer is a system prompt that operates ABOVE whichever frontier model is current. When the model changes, the framework still applies the same reasoning shape: clever-lazy / clever-industrious / stupid-industrious diagnostic; verification-over-enthusiasm; legible failure. Model is variable; framework is constant. The v0.1 benchmark in the open-source hammerstein repo measures this directly across Opus 4.7, Sonnet 4.6, and GPT-5; see § Proof above and eval/RESULTS-v0.1.md for full methodology + verdicts.

- What about my campaign data?

- Stored per-account, used only to power the persistent-context feature for your campaigns. Not shared, not sold, not used to train any model. You can delete a campaign and its data is gone.

- Refund policy?

- Cancel anytime; no refund on the current month, but no future charges. If something is genuinely broken on my end, email me and we'll work it out.

- Who's behind this?

- Ray Weiss, designer at Conflict Simulations Limited. The framework is named after Kurt von Hammerstein-Equord, the German general whose doctrine the project is tuned to.
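The "model is variable; framework is constant" answer in the FAQ above can be pictured as a thin wrapper: the system prompt is fixed, and the backend is any function that accepts it. The sketch below is an assumption-laden illustration. The `ChatBackend` signature, the placeholder prompt text, and the two stub backends are hypothetical, and no vendor SDK is called.

```python
from typing import Callable

# Any function that takes (system_prompt, user_message) and returns the reply text.
ChatBackend = Callable[[str, str], str]

# Placeholder only; the real system prompt lives in the open-source repo.
FRAMEWORK_PROMPT = "<Hammerstein system prompt goes here>"


def with_framework(
    backend: ChatBackend, system_prompt: str = FRAMEWORK_PROMPT
) -> Callable[[str], str]:
    """Return a function that always sends the same system prompt to `backend`."""
    def ask(user_message: str) -> str:
        return backend(system_prompt, user_message)
    return ask


# Two stand-in backends that just echo what they received.
def stub_model_a(system_prompt: str, user_message: str) -> str:
    return f"[A | {system_prompt}] {user_message}"


def stub_model_b(system_prompt: str, user_message: str) -> str:
    return f"[B | {system_prompt}] {user_message}"


ask_a = with_framework(stub_model_a)
ask_b = with_framework(stub_model_b)
print(ask_a("Hold or counterattack?"))
print(ask_b("Hold or counterattack?"))
```

Swapping the backend changes only the argument passed to `with_framework`; the prompt and the calling code stay the same, which is the property the FAQ answer describes.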

The free pieces

The framework, the local model, and the CLI / TUI are open-source and stay open-source. If you want to run audits locally with no subscription, those tools are free:

- hammerstein — framework canon (system prompt + RAG corpus)

- hammerstein-7b-lora — distilled QLoRA model on Qwen2.5-7B, runs on any 8GB+ Mac via Ollama

- hammerstein-tui — Rust TUI for daily-driver use

The hosted Wargamer is the paid piece because it's the piece that requires hosted vision + persistent campaign storage + Sonnet-API costs. Everything else stays free.